AI Roadmap Readiness: You Need a Sequence, Not More Tools

Most organizations do not fail AI initiatives because of low effort. They fail because they execute in the wrong order.

The typical pattern is familiar: tool purchases happen early, pilots launch in parallel, and teams stay busy for months without one production workflow that is clearly faster, cleaner, or more reliable.

That is not a tooling problem. It is a sequencing problem.

The hard part is not discovering possibilities. It is deciding what to do first, what to postpone, and what to reject.

Why sequence beats activity

Modern tools can summarize, extract, classify, route, draft, and orchestrate across systems you already use. Yet many programs stall because capability outpaces operating discipline.

Without sequence:

- effort disperses across low-impact pilots

- teams optimize local tasks but not end-to-end flow

- governance arrives late and slows everything down

- leadership cannot distinguish progress from motion

With sequence:

- one workflow gets materially better in production

- ownership is clear

- metrics become comparable

- expansion decisions become evidence-based

The readiness problem most teams miss

Teams often ask, "Which tool should we buy?" before answering:

- Which workflow has the highest operational drag?

- Which part of that workflow is actually automatable now?

- What quality threshold is acceptable for production?

- Who owns cross-functional decisions when trade-offs appear?

If these are unresolved, additional tooling usually adds complexity, not speed.

What a strong readiness audit should deliver

A good readiness pass is short, concrete, and decision-oriented. It should produce:

1) Workflow priority order

A ranked list based on impact, feasibility, and implementation risk.

2) Baseline operating metrics

Current cycle time, error/rework rate, throughput, and manual effort.

3) 90-day execution lane

One scoped initiative with milestones, owner, and explicit success criteria.

4) Governance and exception model

Rules for confidence thresholds, human review, escalation paths, and auditability.

Without these outputs, implementation tends to fragment by department.
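One way to make the workflow priority order concrete is a simple scoring pass. The sketch below is illustrative only: the 1-to-5 scales, the `impact * feasibility / risk` formula, and the example workflow names are all assumptions made for this example, not a standard method. Any consistent scoring rule that your team agrees on would serve the same purpose.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    impact: int       # assumed scale: 1 (low) to 5 (high) operational benefit
    feasibility: int  # assumed scale: 1 (hard) to 5 (easy) to automate now
    risk: int         # assumed scale: 1 (low) to 5 (high) implementation risk


def priority_score(w: Workflow) -> float:
    # Hypothetical rule: reward impact and feasibility, penalize risk.
    return w.impact * w.feasibility / w.risk


def rank(workflows: list[Workflow]) -> list[Workflow]:
    # Highest score first, so the top entry is the candidate for the 90-day lane.
    return sorted(workflows, key=priority_score, reverse=True)


# Hypothetical candidates; the scores would come from the readiness audit.
candidates = [
    Workflow("invoice intake", impact=4, feasibility=4, risk=2),
    Workflow("contract review", impact=5, feasibility=2, risk=4),
    Workflow("support ticket routing", impact=3, feasibility=5, risk=1),
]

for w in rank(candidates):
    print(f"{w.name}: {priority_score(w):.2f}")
```

With these made-up inputs, the highest-impact workflow (contract review) ranks last because it is hard to automate and risky, which is the kind of trade-off a ranked list is meant to surface.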

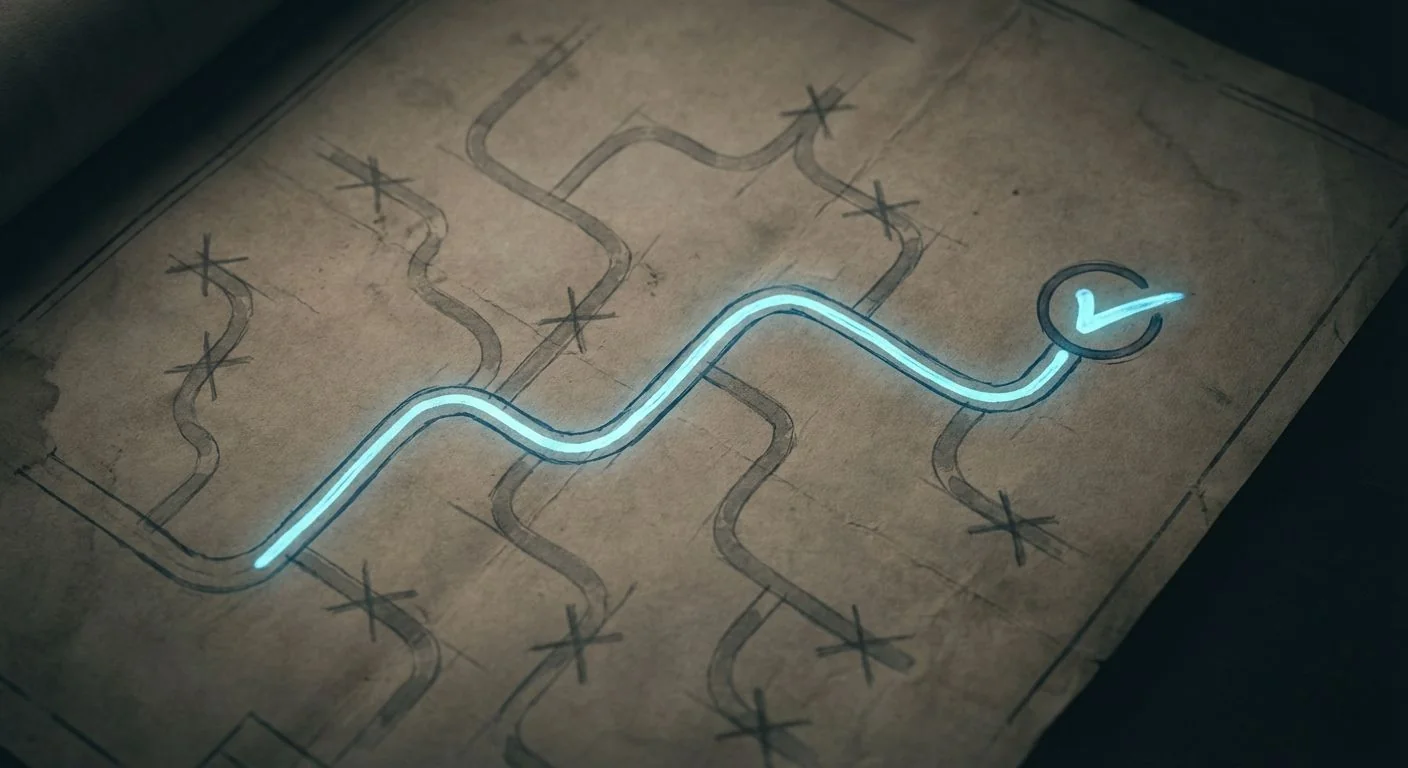

A practical sequencing model

Use this four-step approach.

Step 1: Diagnose friction

Map where time, rework, and handoff failures occur in the current process.

Step 2: Choose one lane

Pick one workflow where value is high and dependencies are manageable this quarter.

Step 3: Define the control model

Document where human review is required and how low-confidence outputs are handled.

Step 4: Run and review

Ship quickly, review every two weeks, and decide whether to expand, hold, or adjust based on metric movement.

This replaces "pilot theater" with repeatable execution.
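The control model in Step 3 can be written down as an explicit routing rule. The following is a minimal sketch, assuming the automation produces a confidence value between 0 and 1. The threshold values and the names `AUTO_THRESHOLD`, `REVIEW_THRESHOLD`, and `route_output` are hypothetical and would need to be set from your own quality threshold.

```python
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    ESCALATE = "escalate"


# Assumed thresholds for illustration; tune them against measured error rates.
AUTO_THRESHOLD = 0.90
REVIEW_THRESHOLD = 0.60


def route_output(confidence: float) -> Route:
    """Decide how one automated output is handled, based on its confidence."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= AUTO_THRESHOLD:
        return Route.AUTO_APPROVE
    if confidence >= REVIEW_THRESHOLD:
        return Route.HUMAN_REVIEW
    # Low-confidence outputs go to the escalation path, not silently through.
    return Route.ESCALATE


# Keep a simple record of each decision so the routing is auditable later.
audit_log = []
for item_id, confidence in [("A-1", 0.97), ("A-2", 0.72), ("A-3", 0.41)]:
    decision = route_output(confidence)
    audit_log.append({"item": item_id, "confidence": confidence, "route": decision.value})

print(audit_log)
```

Writing the rule as code forces the team to answer the questions the control model exists for: where the cutoffs sit, who sees the middle band, and what happens to everything below it.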

Common sequencing errors

Error 1: Starting with the broadest problem

Large cross-functional transformations fail when the scope of the first initiative is too wide.

Error 2: Mistaking tool access for adoption

Usage statistics show that people opened the tool. They do not prove that the workflow improved.

Error 3: No owner with authority

If one person cannot make process decisions across teams, velocity collapses.

Error 4: No definition of done

Projects continue indefinitely when target outcomes are not fixed at launch.

What leadership should ask every two weeks

- Did cycle time improve in the chosen lane?

- Did quality stay stable or improve?

- Did exception volume trend down?

- Is the owner unblocked on cross-team decisions?

- Are we ready to scale, or should we harden first?

This review cadence creates organizational learning and prevents random expansion.
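The review questions can be turned into a repeatable decision rule. This is a sketch under stated assumptions: the `LaneSnapshot` fields, the `review` function, and the specific rule mapping answers to expand, hold, or adjust are hypothetical, chosen to mirror the questions above and not taken from any established framework.

```python
from dataclasses import dataclass


@dataclass
class LaneSnapshot:
    cycle_time_hours: float  # average time to complete one item
    error_rate: float        # share of items needing rework, 0 to 1
    exceptions: int          # items escalated or handled manually in the period


def review(previous: LaneSnapshot, current: LaneSnapshot, owner_unblocked: bool) -> str:
    """Compare two review periods and return 'expand', 'hold', or 'adjust'."""
    faster = current.cycle_time_hours < previous.cycle_time_hours
    quality_ok = current.error_rate <= previous.error_rate
    fewer_exceptions = current.exceptions < previous.exceptions

    # Assumed rule: a quality regression or no speed gain means the approach needs changing.
    if not quality_ok or not faster:
        return "adjust"
    # Everything is moving the right way and the owner can act: ready to scale.
    if fewer_exceptions and owner_unblocked:
        return "expand"
    # Improving, but exceptions or ownership still need hardening first.
    return "hold"


# Hypothetical numbers for two consecutive review periods.
previous = LaneSnapshot(cycle_time_hours=10.0, error_rate=0.05, exceptions=40)
current = LaneSnapshot(cycle_time_hours=8.0, error_rate=0.04, exceptions=30)
print(review(previous, current, owner_unblocked=True))
```

The exact rule matters less than fixing it before the first review, so the expand decision follows the numbers instead of the mood in the room.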

Final takeaway

Most organizations do not need more AI capability. They need a tighter operational sequence.

One lane. One owner. One measurable outcome.

Get that right, and the roadmap becomes execution, not aspiration.