Most AI Projects Fail Before Implementation Starts

The biggest blocker is usually prioritization, not budget. An audit-first sequence turns AI from tool sprawl into measurable operational outcomes.

Most AI projects do not stall because teams lack interest. They stall because teams start building before they decide what should be built first.

The same pattern repeats across operators: many teams still do not know where to start, while only a small minority cite budget as the primary blocker. That gap explains why organizations can buy tools quickly and still feel stuck months later.

The failure mode is predictable:

- teams test several tools in parallel

- none are wired into the live workflow

- leadership asks for ROI proof before baseline metrics exist

- momentum drops because no one owns post-launch adoption

This is not a talent problem. It is a sequencing problem.

Why tool-first programs underperform

A tool can improve a task. It cannot fix unclear operating priorities.

When teams skip diagnosis, they often automate the wrong lane: low-impact tasks, high-friction dependencies, or workflows without clear owners. Early demo quality creates confidence, but production outcomes stay weak because the program was not anchored to operational constraints.

That is why “AI adoption” often becomes software activity instead of operating improvement.

Five questions that should come before build

Before implementation, leadership should require clear answers to five practical questions:

- Which manual workflow is costing the most time each week?

- Where does work queue up waiting on one person or team?

- Which use case has low complexity and visible impact?

- How will success be measured in the first 30 to 60 days?

- Who owns adoption and performance after launch?

If these answers are weak, adding more tooling usually increases noise, not value.

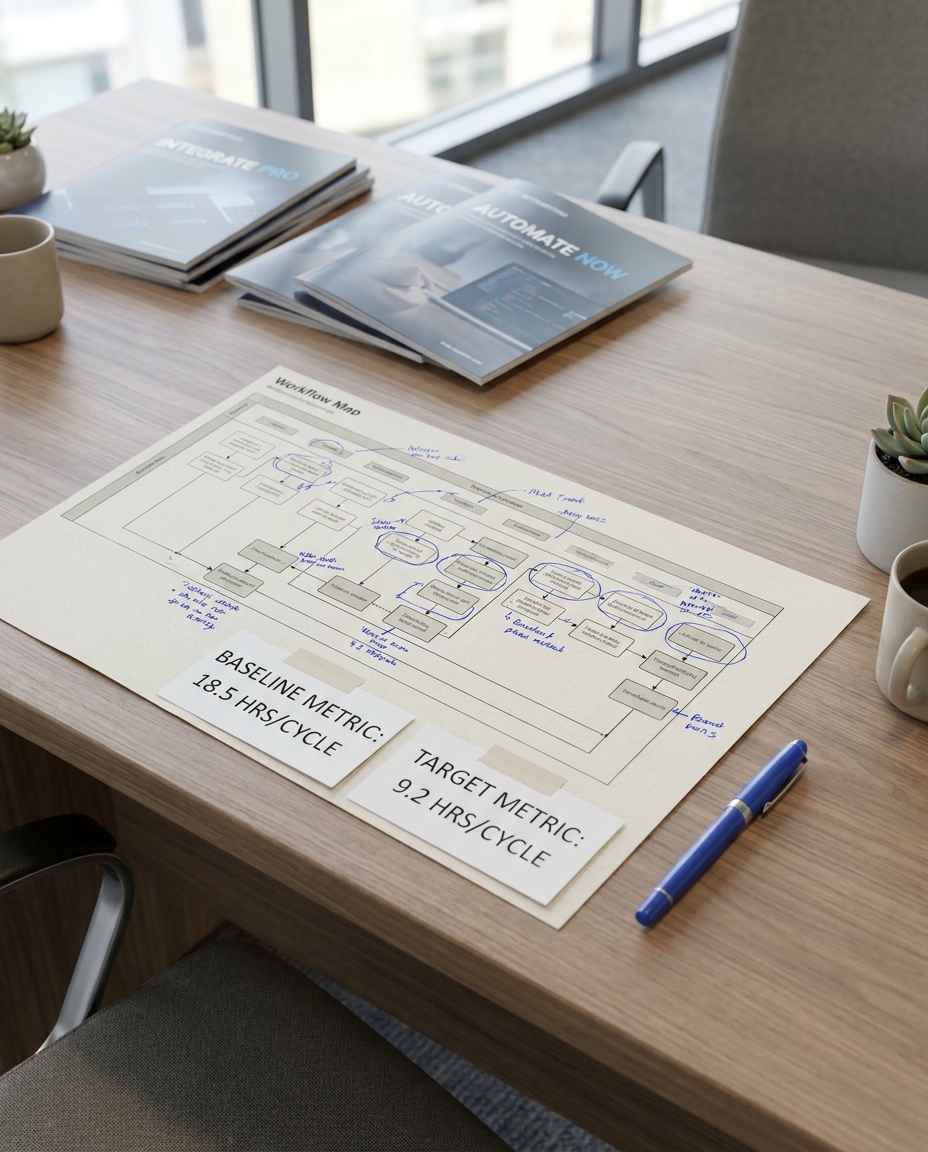

The audit-first sequence that works

A strong audit-first motion is short and decision-oriented:

1) Discovery

Map how work actually moves today, including handoffs, bottlenecks, and exception loops.

2) Prioritization

Rank three to seven opportunities by impact, feasibility, and dependency risk.

3) Pilot with production intent

Choose one lane with a named owner and a measurable baseline.

4) Measure and refine

Track cycle time, error rates, and throughput in the first 30 to 60 days.

5) Scale by pattern

Expand only after one lane is stable, measurable, and governed.

This sequence reduces waste, and the first stable lane gives every later lane a tested pattern to reuse.
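The prioritization step can be made concrete with a simple scoring pass. The sketch below is one possible approach, not a prescribed method: the 1 to 5 scales, the weighting in `priority_score`, and the three example workflows are all illustrative assumptions that a team would replace with its own.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    name: str
    impact: int           # assumed scale: 1 (low) to 5 (high)
    feasibility: int      # assumed scale: 1 (hard) to 5 (easy)
    dependency_risk: int  # assumed scale: 1 (low risk) to 5 (high risk)


def priority_score(o: Opportunity) -> int:
    # Hypothetical weighting: impact counts double, dependency risk subtracts.
    return 2 * o.impact + o.feasibility - o.dependency_risk


def rank(opportunities):
    # Highest score first; ties keep their original order.
    return sorted(opportunities, key=priority_score, reverse=True)


# Made-up candidates for illustration only.
candidates = [
    Opportunity("invoice matching", impact=4, feasibility=4, dependency_risk=2),
    Opportunity("support ticket triage", impact=5, feasibility=3, dependency_risk=4),
    Opportunity("weekly status reports", impact=2, feasibility=5, dependency_risk=1),
]

ranked = rank(candidates)
for o in ranked:
    print(o.name, priority_score(o))
```

The exact weights matter less than agreeing on them before the ranking is run, so the result is a decision rather than a debate.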

What leaders should do this quarter

If your team believes in AI but execution keeps stalling, do not start with another vendor evaluation. Start with operational clarity:

- pick one workflow that clearly drains time or quality

- assign one accountable owner

- set one baseline metric and one target metric

- launch one lane with explicit exception handling

That is how AI becomes an upgrade to how the business operates instead of another software expense.
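Pairing a baseline metric with a target makes progress easy to state as a single number. The helper below is a minimal sketch under that assumption; the function name and the example figures are hypothetical, and it simply reports what fraction of the baseline-to-target gap has been closed.

```python
def progress_toward_target(baseline: float, target: float, current: float) -> float:
    """Fraction of the baseline-to-target gap closed so far.

    Works for metrics that should fall (cycle time) or rise (throughput).
    A negative result means the metric moved away from the target.
    """
    gap = target - baseline
    if gap == 0:
        raise ValueError("target must differ from baseline")
    return (current - baseline) / gap


# Illustrative numbers only: cycle time in hours, lower is better.
cycle_time = progress_toward_target(baseline=48, target=24, current=36)

# Illustrative numbers only: items processed per week, higher is better.
throughput = progress_toward_target(baseline=100, target=150, current=90)

print(cycle_time)   # 0.5
print(throughput)   # -0.2
```

A value of 0.5 means the lane is halfway to its target; a negative value is an early signal to revisit the lane before scaling it.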

Practical implementation scorecard

If you want this discipline to survive past kickoff, review one scorecard every two weeks:

- Priority clarity: Is there one lane, one owner, and one baseline metric?

- Integration readiness: Are handoffs mapped and system boundaries explicit?

- Governance maturity: Are exception rules and escalation paths documented?

- Adoption momentum: Are users following the intended lane or routing around it?

- Outcome signal: Is at least one metric moving in the right direction?

The goal is not perfect planning. The goal is fast learning with measurable control. A scorecard makes execution visible and keeps teams from drifting back into tool-first behavior.
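For teams that track the review in a script or notebook rather than a document, the five checks above can be recorded as a small data structure. This is a sketch only: the `Scorecard` class, its field names, and the `open_items` helper are assumptions introduced for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class Scorecard:
    priority_clarity: bool       # one lane, one owner, one baseline metric
    integration_readiness: bool  # handoffs mapped, system boundaries explicit
    governance_maturity: bool    # exception rules and escalation paths documented
    adoption_momentum: bool      # users follow the intended lane
    outcome_signal: bool         # at least one metric moving the right way


def open_items(card: Scorecard) -> list[str]:
    # Names of the checks that did not pass in this review.
    return [f.name for f in fields(card) if not getattr(card, f.name)]


# Hypothetical review result for one two-week cycle.
review = Scorecard(
    priority_clarity=True,
    integration_readiness=True,
    governance_maturity=False,
    adoption_momentum=True,
    outcome_signal=False,
)

print(open_items(review))  # ['governance_maturity', 'outcome_signal']
```

Keeping each review as a record like this makes it simple to compare cycles and see whether the same items stay open.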

Closing

Organizations do not need infinite experimentation. They need a disciplined sequence.

When clarity comes first, implementation gets faster, risk gets lower, and outcomes become measurable. The teams that win this phase are not the ones testing the most tools. They are the ones choosing the right workflow first.